Monday, October 31, 2011

Google vs Yahoo Graph

Search Engine Position Checker

Source: http://www.webmaster-toolkit.com/search-engine-position-checker.shtml

Google Rankings

Source: http://www.googlerankings.com

Enhance the Subscriber List to Your Newsletter

Source: http://www.trafficmystic.com/216/enhance-the-subscriber-list-to-your-newsletter/

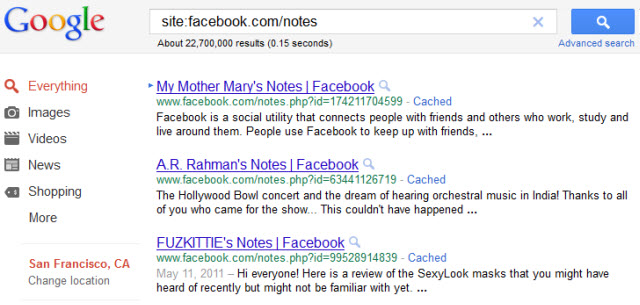

Advanced Google Search Methods

Meta Tag Generator

Sunday, October 30, 2011

Page Rank Search

Source: http://www.prsearch.net/index.php

Future PageRank Tool

Source: http://www.seochat.com/seo-tools/future-pagerank/

Multi DC PageRank Checker

Source: http://www.seobench.com/multi-dc-pr-check/

Five Tips for Your Online Promotional Strategy

Source: http://www.seo-leaders-online.co.za/seo/five-tips-for-your-online-promotional-strategy/

Saturday, October 29, 2011

MSN Position Search

Niche Finder

Source: http://www.wordstream.com/keyword-niche-finder/

eCommerce SEO? Google AdWords or No Soup for You

Affiliates Are a Dying Breed

Being an ecommerce affiliate keeps getting harder & harder unless you have a strong brand and/or are selling things with a complex sales cycle.

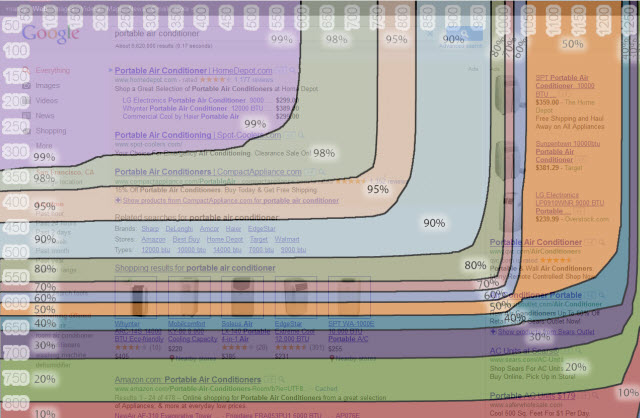

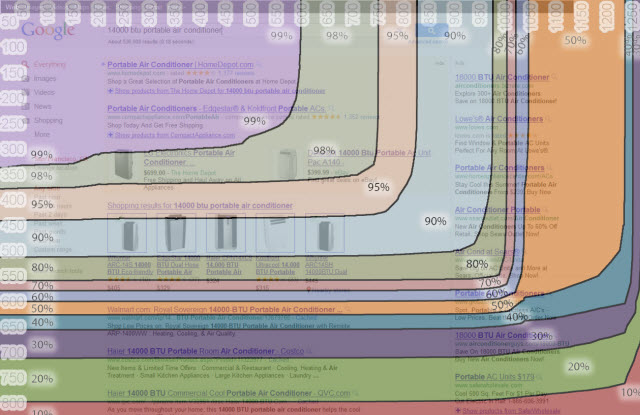

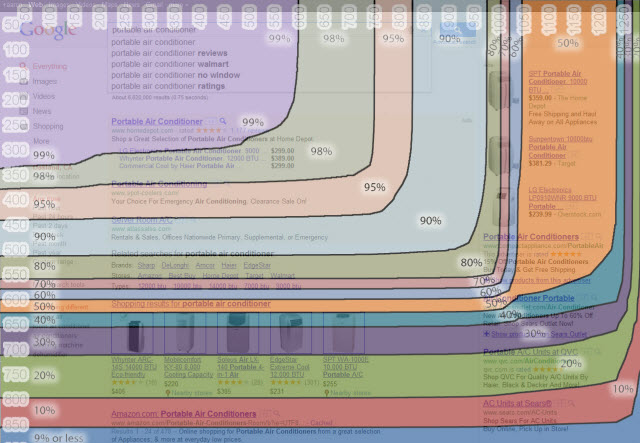

Portable air conditioners are a pretty niche category, but when I look at it I simply don't see any opportunity on the SEO front unless you take on the significant risk of carrying inventory & drop hundreds of thousands to millions of dollars on branding.

The Corporate, The Bad & The Ugly

Head keyword: note the brand navigation, the extended AdWords ads & the product search results that drive the traditional search results below the fold.

Tail keywords are every bit as ugly, with Google product ads sometimes coming in inline, further driving down the organic search results.

And it is nastier when Google Instant is still extended. 10% of browsers can see a single organic search result!

Corporate, Corporate, Corporate

As ugly as that looks, not only do the larger merchants have an advantage in AdWords (getting their product ads on a CPA basis while smaller merchants have to pay on a CPC basis), Google product search (more reviews), inline search navigation options (featuring the same brands yet again), but most of the organic results (that are generally below the fold) are also the same big brands after the Panda update gave them a boost while torching their smaller competitors.

The Chicken vs Egg Problem of Scale

For online pure-plays (outside of Amazon.com, eBay & a few others) the "no opportunity anywhere" problem in search also harms the ability to be competitive on pricing because without the ability to rank you don't have the leverage over the supply chain the way that the big box stores do from winning everywhere in the SERP & having offline distribution. There is little opportunity to organically grow to scale over time unless you enter the market with some point of leverage (like going so far as creating the product right on through to marketing it to consumers), sell something totally different than what is already available in the market (and hope it doesn't get cloned), buy out an existing company that went bankrupt, and/or build significant non-search distribution channels first.

I suppose the last option on that front would be to promote your stuff on a large platform that is already doing well in Google (say eBay, Amazon, or Facebook), but doing that gives you limited control over the customer experience & forces you to keep chasing new one-off sales rather than building & deepening relationships with customers.

Killing Off Diversity

As Google collects more usage data (mobile is already 12% of search) these big box stores will have an even bigger moat between them & smaller competitors.

The "big box stores only" search results also create an experience that is bland & uniform. At first glance things may look different, but it is the same type of sites again and again: a lot of the brands cross hire, have similar "politically correct" cultures & have roughly similar customer experience sets. When you buy from Walmart you are not going to get that caring email from a founder offering hands-on tips & advice. Scale requires homogenization, which generally kills off personality & differentiation.

Killing Off Innovation

The problem with the "be huge or die" approach to search is that most legitimate economic innovation comes from smaller players that challenge the existing power structure. Set the barrier to entry too high and you might have less spam to fight, but you certainly will have less economic innovation & more of the would-be innovators will be stuck working dead-end jobs at dysfunctional corporations.

Now You See it, Now You Don't

Most people can't see what they are missing out on so they won't know, but (as Tim Wu put it so eloquently in The Master Switch) the same was true for AT&T when it held back innovations like the answering machine & what ultimately came to be the WWW. What sort of price do you put on email taking a decade longer to launch? How many other disruptive changes built off of incremental improvements will never appear because they simply weren't large & corporate enough to compete on Google's web?

The web was great because it offered something different. Unfortunately you have to search using something other than Google to find it.

Source: http://www.seobook.com/ecommerce-seo-google-adwords

Google Toolbar For Firefox

Source: http://www.google.com/tools/firefox/toolbar/FT3/intl/en/

Free Broken Link Checker

Source: http://www.dead-links.com/check_links.php

Friday, October 28, 2011

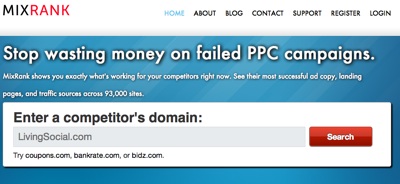

Increase Your Profits with MixRank's New Competitive Research Tool

Not many spy tools out there do what MixRank does. MixRank is a tool that gives you the ability to peek into the contextual and display ad campaigns of sites advertising with Google AdSense.

Uncovering successful advertising on the AdSense network can give you all sorts of ideas on how to increase your site's profitability.

Not only can you uncover profitable AdSense ad campaigns but you can pick off AdSense publisher sites and leverage competitive research data off of those domains to help with your SEO campaign.

With MixRank, you can own your competitors in the following ways:

- Obtain the domains your competitor's ads are served on

- Swipe your competitor's ad copy

- Watch ad trends to target your competition's most profitable campaigns and combinations of ads

Another great thing about MixRank is how easy it is to use. Let's go step by step and see how powerful MixRank really is!

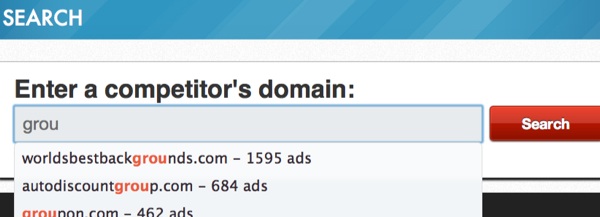

Step 1: Pick a Competitor to Research

MixRank makes it super easy to get started. Just start typing in a domain name and you'll see a suggested list of names along with the number of ads available:

Here we are going to take a look at Groupon as we consider building a niche deals site. Keep in mind that MixRank is currently accepting free accounts while in beta, so over time we can expect their portfolio to grow and grow.

MixRank breaks their tool down into 2 core parts:

- Ads (text and display)

- Traffic Sources

We'll cover all the options for both parts of the MixRank tool in the following sections.

Step 2: Working with Ad Data (Text and Display)

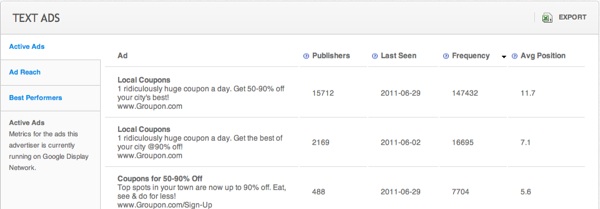

Let's start with text ad options. So with text ads you have 3 areas to look at:

- Active Ads

- Ad Reach

- Best Performers

Here's a look at the interface:

As you can see, it is really simple to switch between different ad research options. Also, you can export all the results at any time.

The image above is for "Active Ads". In the active ads tab you'll get the following data points (all sortable):

- Publishers - maximum number of AdSense publishers running that particular ad

- Last Seen - last known date the ad was seen by MixRank

- Frequency - number of publisher sites on which the ad appeared

- Avg. Position - average position of the ad inside AdSense blocks

Here you can export the data to manipulate in Excel or do some sorting inside of MixRank to find the ads earning the lion's share of the traffic.
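The sorting step described above can also be done in a few lines of code once the data is exported. The following is only a minimal sketch: it assumes the export is a CSV with an `Ad` column and a `Publishers` column, which are hypothetical names (the actual MixRank export layout is not documented here), and it uses publisher count as a rough proxy for traffic. The sample rows are made up.

```python
import csv
import io


def top_ads_by_publishers(csv_text, top_n=3):
    """Rank exported ads by publisher count and report each ad's share.

    Assumes hypothetical column names "Ad" and "Publishers".
    """
    rows = list(csv.DictReader(io.StringIO(csv_text)))
    for row in rows:
        row["Publishers"] = int(row["Publishers"])
    total = sum(row["Publishers"] for row in rows)
    # Highest publisher count first
    ranked = sorted(rows, key=lambda r: r["Publishers"], reverse=True)
    return [
        (row["Ad"], row["Publishers"], row["Publishers"] / total if total else 0.0)
        for row in ranked[:top_n]
    ]


# Made-up sample data, only to show the shape of the input
SAMPLE_EXPORT = """Ad,Publishers,Frequency,Avg. Position
Ad A,600,4,1.4
Ad B,300,2,2.1
Ad C,100,1,3.0
"""

for ad, publishers, share in top_ads_by_publishers(SAMPLE_EXPORT, top_n=2):
    print(f"{ad}: {publishers} publishers ({share:.0%} of total)")
```

Reporting each ad's share of the total makes it easier to see whether one or two ads dominate the advertiser's reach or whether it is spread evenly.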

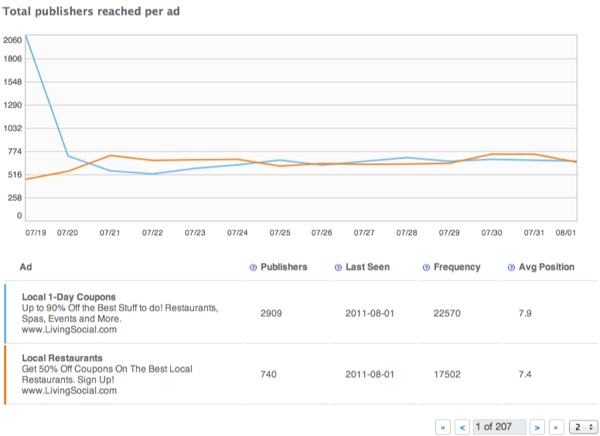

The Ad Reach tab shows up to 4 ads at a time and compares the publisher trends for those ads. To spread the love around let's look at a couple ads from LivingSocial.Com:

Here you can see that one ad crashed and fell more in line with an existing ad. You can compare up to 4 ads at once to get an idea of what kind of ad copy is or might be working best for this advertiser.

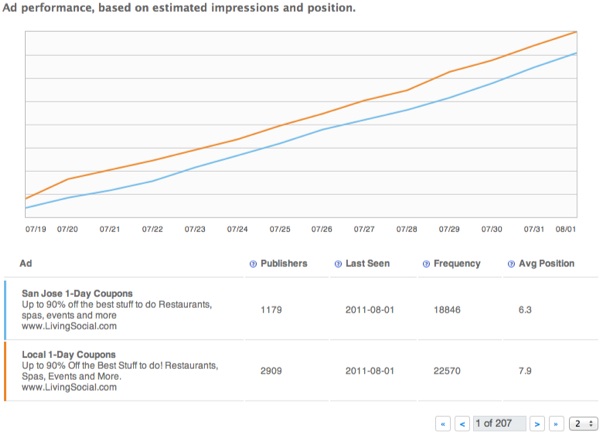

The Best Performers section compares, again, up to 4 ads at a time (use the arrows to move on to the next set) which have recently taken off across the network.

Needless to say, this report can give you ideas for new ad approaches and maybe even new products/markets to consider advertising on.

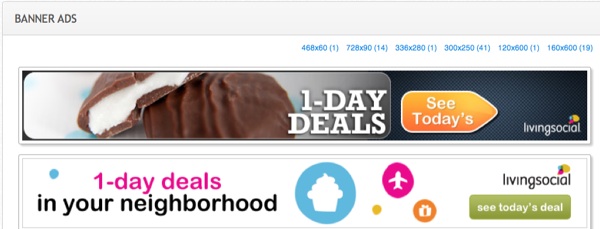

If the advertiser is running Banner Ads you can see those as well:

With Banner Ads, MixRank groups them by size and you can see all of them by clicking on the appropriate size link.

When you click on a banner ad you'll see this:

This is a good way to get ideas on which banner ads are sticking for your competitors. Also, it's a great way to get ideas of how to design your ads too. A little inspiration goes a long way :)

So that's how you work with the Ads option inside of MixRank. One thing I dig about MixRank is that it's so easy to use, the data is easy to understand and work with, and it does its intended job very well (ok, ok so 3 things!)

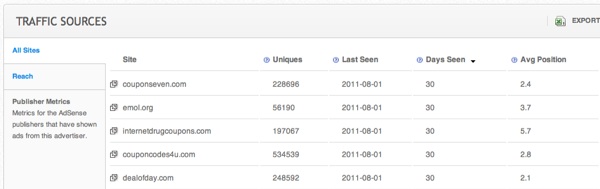

Step 3: Traffic Sources

Now that you have an idea of what type of text ads and banner ads are effective for your competition, it's time to move into what sites are likely the most profitable to advertise on.

MixRank gives you the following options with traffic sources:

- Traffic Sources - domains being advertised on, last date when the ad was seen, average ad position and number of days seen over the last month

- Reach - total number of publishers the advertiser is running ads on

The traffic sources tab shows:

- Uniques - estimated number of unique visitors based on search traffic estimates

- Last Seen - last date MixRank saw the ad

- Days Seen - number of days over the last month MixRank saw the ad

- Average Position - average position in the AdSense Block

A winning combination here would be recent Last Seen dates and a high number under the Days Seen category. This would mean the advertiser has been and still is running ads on the domain, indicating that it may be a profitable spot for them to be in.

You can also pull these domains into a competitive research tool like our Competitive Research Tool, SemRush, SpyFu, or KeywordSpy and find potential keywords you can add to your own SEO campaign.

Another tip here would be to target these domains as possible link acquisition targets for your link building campaign.

The Reach option is pretty self-explanatory; it shows the total number of publishers the advertiser is showing up on:

Another good way to evaluate traffic sources is to view the average position (remember, all the metrics are sortable). A high average position will confirm that the ads are pretty well targeted to the content of that particular domain.

Combine the high average position with Days Seen/Last Seen and you've got some well-targeted publishers. You can export all the data to Excel and apply multiple filters to bring the cream of the crop to the top of your ad campaign planning.
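The multi-filter idea can be sketched in code as well. This is a rough illustration under stated assumptions, not MixRank's own method: the field names (`domain`, `last_seen`, `days_seen`, `avg_position`) and the thresholds are hypothetical, the rows are made up, and it assumes position 1 is the top slot in an AdSense block, so a "high" average position means a numerically low value.

```python
from datetime import date, timedelta


def shortlist_publishers(rows, today, max_age_days=7, min_days_seen=20,
                         max_avg_position=2.0):
    """Keep domains seen recently, seen often, and placed near the top.

    All thresholds are illustrative defaults, not recommended values.
    """
    cutoff = today - timedelta(days=max_age_days)
    keep = [
        r for r in rows
        if r["last_seen"] >= cutoff
        and r["days_seen"] >= min_days_seen
        and r["avg_position"] <= max_avg_position
    ]
    # Most consistently seen first; a better (lower) position breaks ties
    return sorted(keep, key=lambda r: (-r["days_seen"], r["avg_position"]))


# Made-up rows, only to show the shape of the input
SAMPLE_ROWS = [
    {"domain": "example-deals.com", "last_seen": date(2011, 10, 27),
     "days_seen": 28, "avg_position": 1.3},
    {"domain": "example-coupons.net", "last_seen": date(2011, 10, 26),
     "days_seen": 25, "avg_position": 1.8},
    {"domain": "example-stale.org", "last_seen": date(2011, 9, 30),
     "days_seen": 27, "avg_position": 1.1},
    {"domain": "example-rare.com", "last_seen": date(2011, 10, 27),
     "days_seen": 3, "avg_position": 1.0},
    {"domain": "example-low.com", "last_seen": date(2011, 10, 27),
     "days_seen": 26, "avg_position": 3.5},
]

shortlist = shortlist_publishers(SAMPLE_ROWS, today=date(2011, 10, 28))
print([r["domain"] for r in shortlist])
```

Each of the three rejected sample rows fails exactly one filter (stale, rarely seen, or poorly positioned), which makes it easy to adjust one threshold at a time and see what changes.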

MixRank is Looking Good

It's early on for MixRank but so far I like what I see. The tool can do so many things for your content network advertising, media buy planning, link building campaigns, and SEO campaigns that I feel it's an absolute no-brainer to sign up for right now.

For now it's *free* during their beta testing. Currently they are tracking about 90,000 sites so it's still fairly robust for being a new tool.

Source: http://www.seobook.com/mix-rank

One Way Link Verify

Source: http://www.webuildpages.com/tools/internet-marketing-page.htm

Blog SEO-learn more about SEO tips

PageRank Search Prog

Advanced Google Search Methods

Source: http://www.algotech.dk/googlesearches.asp

Algorithmic Journalism & The Rise of Corporate Content Farms

The "Best" of Big Media

Large publishers who lobbied Google hard for a ranking boost got it when Panda launched:

"A private understanding was reached between the OPA and Google," an office assistant with e-mail evidence told Politically Illustrated. "The organization is responsible for coordinating legal and legislative matters that impact our members, and one of the issues was applying pressure to Google to get them to adjust their search algorithm to favor our members."

At the same time, said "premium publishers" were backfilling their websites padding them out with auto-generated junk created by companies like Daylife, where some of the pages offer Mahalo-inspired 100% recycled content.

My suspicion is that Google did not care about the auto-generated "news" garbage for a number of reasons:

- it helps subsidize the big media interests

- they don't want to hit big media & cause a backlash

- it is quite easy for Google to detect & demote whenever they want to

- it gives Google more flexibility going forward when deciding how to deal with issues (if everyone is a spammer then Google has more flexibility in deciding how to handle "spam" to maximize their returns.)

It is the exact same reason that Google says link buying is bad, while tolerating "sponsored features" sections on large newspapers:

Machine Generated Journalism

Where Google winds up in trouble on this front is when start ups that create machine generated content go mainstream. (Unless Google buys them, then it is more free content for Google!)

The leaders of Narrative Science emphasized that their technology would be primarily a low-cost tool for publications to expand and enrich coverage when editorial budgets are under pressure. The company, founded last year, has 20 customers so far. Several are still experimenting with the technology, and Stuart Frankel, the chief executive of Narrative Science, wouldn't name them. They include newspaper chains seeking to offer automated summary articles for more extensive coverage of local youth sports and to generate articles about the quarterly financial results of local public companies.

Official sources using "automated journalism" is a perfect response to Google's brand-focused algorithms:

Last fall, the Big Ten Network began using Narrative Science for updates of football and basketball games. Those reports helped drive a surge in referrals to the Web site from Google's search algorithm, which highly ranks new content on popular subjects, Mr. Calderon says. The network's Web traffic for football games last season was 40 percent higher than in 2009.

How cheap is that technology?

The above linked article states that "the cost is far less, by industry estimates, than the average cost per article of local online news ventures like AOL's Patch or answer sites, like those run by Demand Media."

Once again, even the lowest paid humans are too expensive when compared against the cost of robots.

And the exposure earned by the machine-generated content will be much greater than Demand Media gets, since Demand Media was torched by the Panda update AND many of the sites using this "algorithmic journalism" were given a ranking boost by Google due to their brand strength.

The improved cost structure for firms employing "algorithmic journalism" will evoke Gresham's law. This starts off on niche market edges to legitimize the application, fund improvement of the technology & "extend journalism" but a couple years into the game a company that is about to go under bets the farm. When the strategy proves a winner for them, competing publishers either adopt the same or go under.

That is the future.

Across thousands of cities, millions of topics & billions of people.

Even More Corporate Boosts

Just because something is large does not mean it is great across the board. Businesses have strengths and weaknesses. Sure, I love shopping on eBay for vintage video games, but does that mean I want to buy books from eBay? Nope.

Likewise, Google's friend of a friend approach to social misses the mark. Do I care that someone I exchanged emails with is a fan of an athlete who promotes his own highlight reels? No I do not.

In a world where machine generated journalism exists, I might LOVE one article from a publication while loathing auto-generated garbage published elsewhere on the same site.

Line Extension & "Merging Without Merging"

At Macworld in 2007 Eric Schmidt said "What I liked about the new device and the architecture of the Internet is you can merge without merging. Each company should do the absolutely best thing they can do every time, and I think he's shown that today."

If you don't have the ability to algorithmically generate content to test new markets then one of the best ways to "merge without merging" is to sell traffic to partners via an affiliate program.

Google has no problem promoting their own affiliate network, investing in other affiliate networks, or inserting themselves as the affiliate.

Google is also fine with Google scraping 3rd party data & creating a content farm that inserts themselves in the traffic stream. After they have damaged the ecosystem badly enough they can then buy out a 2nd or 3rd tier market player for pennies on the dollar & integrate them into a Google product featured front & center. (It is not hard to be better than the rest of the market after you have sucked the profits out of the vertical & destroyed the business models of competitors.)

Others don't have the ability to arbitrarily insert themselves into the traffic stream. They have to earn the exposure. But if other people want to play the affiliate game, they need to have "brand."

Affiliates Not Welcome in the Google AdWords Marketplace

At Affiliate Summit last year Google's Frederick Vallaeys basically stated that they appreciated the work of affiliates, but as the brands have moved in the independent affiliates have largely become unneeded duplication in the AdWords ad system. To quote him verbatim, "just an unnecessary step in the sales funnel."

In our free SEO tips we send new members I recommend setting up AdWords and adCenter accounts to test traffic streams, so that you have the data needed to know what keywords to target. But affiliates need not apply:

Hello Aaron Wall,

I just signed up for the Get $75 of Free AdWords with Google Adwords. After receiving an e-mail stating that I was to call an 877 number of Google Adwords, I was told in my phone call that affiliate marketing accounts were not accepted. I guess I confused by this statement. Is this in error? Or am I not understanding the Tip #3 for setting up an account for Google Adwords for promoting a website?

Thank you in advance for your time.

Sincerely,

Carole

The same Google which allows itself to shamefully carry a "get rich quick" AdSense category considers affiliate marketing unacceptable.

Non-AdSense Affiliates Classified as Doorway Pages, Not Welcome in the Organic Search Results?

The exact same thing is happening in the organic search results right now. Maybe not on your keywords & maybe not today, but if you are an affiliate, the trend is not your friend. ;)

I have heard recently from multiple friends that some of their affiliate sites were penalized for being doorway & bridge pages. At the same time, another friend showed me some BeatThatQuote affiliates ranking thin websites.

What is worse, is that in many instances, Google considers networks of similar sites to be spam. Yet at the same time the quickly growing Google Ventures is investing in companies like Whaleshark Media - a roll up currently consisting of 7 *exceptionally* similar websites in the same vertical.

Larger companies like BankRate can run a half-dozen credit card affiliate websites & an affiliate network. And they can create risk-adjusted yield by buying out smaller competitors, largely because Google won't penalize them based on the site being owned by a Fortune 500 company. However the independent affiliate is forced to sell out early due to the risk that Google can arbitrarily decide they are a doorway site at any time.

The absurd thing is that if independent webmasters don't include revenue generation in their website then they don't have the capital *required* to invest in brand & further improving their website. How do you compete against automated journalism when Google gives the automated content a ranking boost? And if you want to do higher quality than the machine generated content, how do you hire employees if you are not even allowed to monetize?

I suppose there is AdSense.

Even though AdSense publishers are Google's affiliates they are still welcome to participate in Google's ecosystem.

Risks to Small Businesses

Small businesses not only have to compete against algorithmic journalism, Google's algorithmic bias toward brands, arbitrary "doorway page" editorial judgements cast against them by engineers & significant algorithm changes, but they also have to deal with loopholes Google leaves in the system that allow them to be arbitrarily removed from the ecosystem.

Google showing you "closed in error" wouldn't be such a big deal if they didn't copy code, violate patents, deal in patents to spread their ecosystem, aggressively bundle & advertise, engage in price dumping, and behave in other anti-competitive ways to put their "incorrect facts" in front of billions of people.

The big issue Google is facing on the content quality front is the incentive structure. They have got that wrong for a long time now. They may think that these big changes are motivating people to improve quality, but realistically the lack of certainty is prohibiting investment in real quality while ramping investment in exploitation.

How can anyone invest deeply over the long term in a search ecosystem where Google...

- competes against advertisers in the ad auction

- hosts content that they automatically preferentially insert into their search results

- actively invests in companies that arbitrage their search results, and

- considered running an SEO firm?

Google would spin Performics out of DoubleClick, and sell it to holding firm Publicis.

Only one major force inside of Google hated the plan. Guess who? Larry Page.

According to our source, Larry tried to sell the rest of Google's executive team on keeping Performics.

"He wanted to see how those things work. He wanted to experiment."

The problem with that is that most honest economic innovation (eg: not just exploitation) comes from small businesses. Going into peak cheap oil where food riots are becoming more common & pensions are about to blow up, we need the kings of information to encourage innovation, rather than relying on doing whatever is easy & trusting established old leaders while retarding risk taking from (& investment in) start ups.

In some markets being successful means staying small, building deeper into a niche, and continuing to add value until you have a strong position. However some ecommerce sites that were not associated with big brands were torched by the Panda update.

Betting on Brand

As Google has tilted their algorithm toward brand, some ecommerce companies that focused on winning relevant niches are now watering down their competitive advantages by betting the company on brand:

CSN Stores is today consolidating its 200+ shopping sites into a single ecommerce website under one brand: Wayfair.com.

...

So why the change to Wayfair.com? Primarily for obvious branding reasons: the company has long been spending a huge amount of money on marketing a lot of separate websites, and now they can focus on advertising just one.

...

Other reasons for the consolidation of the separate shopping site are search engine optimization - which was apparently much needed after Google's recent Panda update - and the fresh ability to make recommendations to shoppers based on their collective purchase history.

But, as some brands abuse Google the same way the content farms did, is that a good bet? I don't think it is.

What is so Bad About Content Farms?

- low quality

- headline over-promises, content under-delivers

- anonymously written

- written by people who are often ignorant of what they are writing about

- add nothing new to the ecosystem, just a dumbed-down rehash of what already exists

- done cheaply & in bulk, in a factory-line styled format

- contains frequent spelling and grammatical errors

- primarily focused on pulling in traffic from search engines

- exists primarily to promote something else (ads or the above-the-fold ecommerce product listings)

- etc. etc. etc.

Such behavior is *not* unique to the sites that were branded as content farms & is quickly spreading across Fortune 500 websites.

Big Brands Become Content Farms

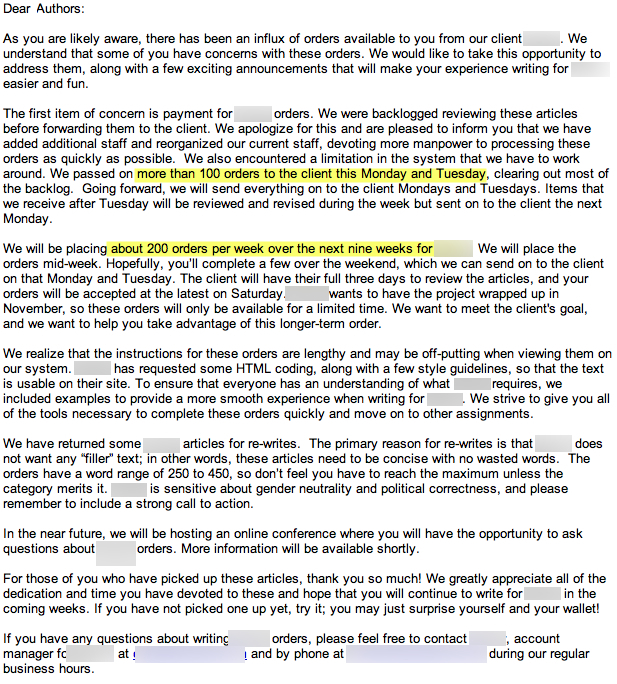

A friend sent me an email which highlighted how a well-known brand was ordering thousands of pieces of "content" in bulk for their branded site.

Here is the email, with blurring to protect the guilty.

The only difference between the "content farms" and the branded sites engaging in content farming is the logo up in the top-left corner of the page. The business process from how the content is created, to who it is created by, to what they are paid to create it, to the interface it is ordered through, on to how it is published is exactly the same.

Many of the same authors who had some of their eHow "articles" deleted are now writing dozens of "articles" for Fortune 500 websites.

When Panda happened & I saw corporate doorway pages (& recycled republished tweets) ranking I hinted that we could expect this problem. I thought it would start with parasitic hosting on branded sites & maybe a few opportunistic brand extensions.

Then I expected it would likely take a couple years to go mainstream.

But with the economy being so weak (and back in yet another undeclared recession, honestly never having left the last one) this shift only took 6 months to happen. At this point I expect it to spread quickly, especially as the economy gets worse. The above Fortune 500 company is one that got a strong boost from Panda & as their downstream traffic from Google picks up over the next month or 2 you can expect many of their competitors to copy the strategy.

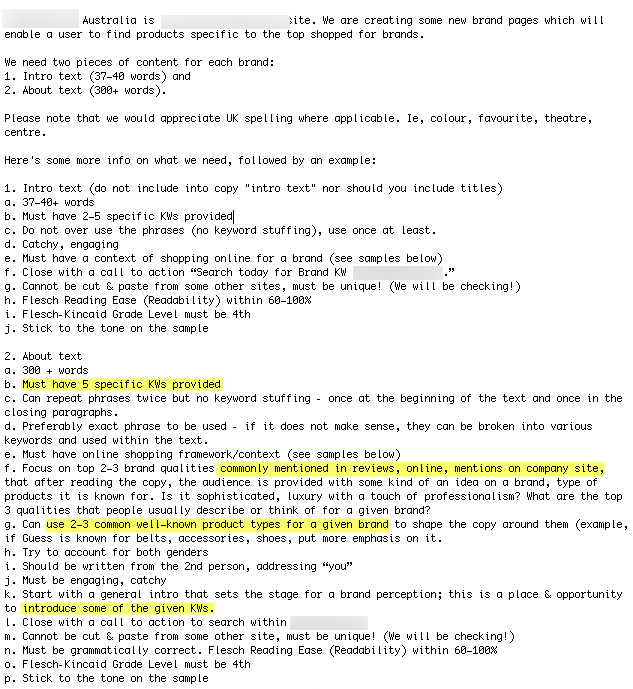

This isn't a US-only phenomenon. A community member sent me the following, from another Fortune 500 company.

Now that Fortune 500s are doing almost everything that smaller players could do (but with more capital, more scale, more algorithmic immunity, requiring smaller players to link to them to be listed & in some cases while replacing humans with algorithms) AND get the Google brand boost, the future is growing more uncertain for independent webmasters that lack brand, relationships, and community.

Big brands are basically pushed across the finish line while smaller webmasters must run uphill with an 80-pound backpack full of gear - in ice & snow, naked, while being shot at. What's worse is that brands are now being bought, sold & licensed - just one more tool in the marketer's toolbox (presuming you have the cash).

Disclaimer: I am not saying that all content farming is bad (I am fairly agnostic...if it works & people like it, then it works), but the above trend highlights the absurdity of Google's notion of whether something is spam based not on the offense, but rather who is doing it, especially as big brands just quietly turned into content farms.

Source: http://www.seobook.com/corporate-content-farming

Thursday, October 27, 2011

Search Engine Ranking Report

Source: http://www.top25web.com/cgi-bin/report.cgi

Link Value

DNS Report

Source: http://www.dnsreport.com/

Keyword Generator

Source: http://www.espotting.com/popups/keywordgenbox.asp

Google Suggest

Source: http://www.google.com/webhp?complete=1

Wednesday, October 26, 2011

Google Brand Bias Reinvigorates Parasitic Hosting Strategy

Yet another problem with Google's brand-first approach to search: parasitic hosting.

The .co.cc subdomain was removed from the Google index due to excessive malware and spam. Since .co.cc wasn't a brand the spam on the domain was too much. But as Google keeps dialing up the "brand" piece of the algorithm there is a lot of stuff on sites like Facebook or even my.Opera that is really flat out junk.

And it is dominating the search results category after category. Spun text remixed together with pages uploaded by the thousand (or million, depending on your scale). Throw a couple links at the pages and watch the rankings take off!

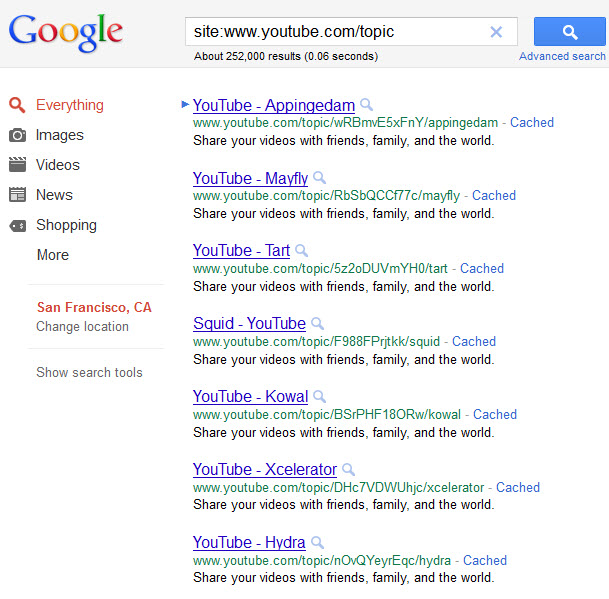

Here is where it gets tricky for Google though...YouTube is auto-generating garbage pages & getting that junk indexed in Google.

While under regulatory review for abuse of power, how exactly does Google go after Facebook for pumping Google's index with spam when Google is pumping Google's index with spam? With a lot of the spam on Facebook at least Facebook could claim they didn't know about it, whereas Google can't claim innocence on the YouTube stuff. They are intentionally poisoning the well.

There is no economic incentive for Facebook to demote the spammers as they are boosting user account stats, visits, pageviews, repeat visits, ad views, inbound link authority, brand awareness & exposure, etc. Basically anything that can juice momentum and share value is reflected in the spam. And since spammers tend to target lucrative keywords, this is a great way for Facebook to arbitrage Google's high-value search traffic at no expense. And since it pollutes Google's search results, it is no different than Google's Panda-hit sites that still rank well in Bing. The enemy of my enemy is my friend. ;)

Even if Facebook wanted to stop the spam, it isn't particularly easy to block all of it. eBay has numerous layers of data they collect about users in their marketplace, they charge for listings, & yet stuff like this sometimes slides through.

And then there are even warning listings that warn against the scams as an angle to sell information:

But even some of that is suspect, as you can't really "fix" fake Flash memory to make the stick larger than it actually is. It doesn't matter what the bootleg packaging states...it's what is on the inside that counts. ;)

When people can buy Facebook followers for next to nothing & generate tons of accounts on the fly there isn't much Facebook could do to stop them (even if they actually wanted to). Further, anything that makes the sign up process more cumbersome slows growth & risks a collapse in share prices. If the stock loses momentum then their ability to attract talent also drops.

Since some of these social services have turned to mass emailing their users to increase engagement, their URLs are being used to get around email spam filters.

Stage 2 of this parasitic hosting problem is when the large platforms move away from turning a blind eye to the parasitic hosting & engage in it directly themselves. In fact, some of them have already started.

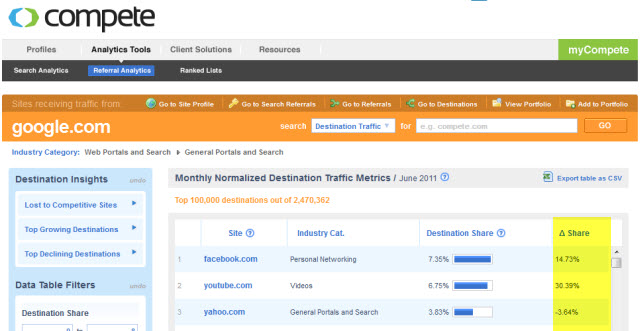

According to Compete.com, YouTube referrals from Google were up over 18% in May & over 30% in July! And Facebook is beginning to follow suit.

Source: http://www.seobook.com/parastic-hosting

seo tool land seo leaders online traffic mystic good backlink see book

Mozdev GoogleBar

Source: http://googlebar.mozdev.org/

seo leaders online traffic mystic good backlink see book link building

Domain Dossier

Source: http://centralops.net/co/DomainDossier.aspx

use seo seo tools seo tool land seo leaders online traffic mystic

The Power of SEO backlinks

-Many backlink profiles are created on other high-PageRank sites, which means your backlinks are high-PR backlinks, so they are truly valuable and highly regarded by the search engines.

-With this method alone, your website's ranking in search engines will improve and your traffic will increase very quickly.

-All of your backlink profiles will be automatically indexed by search engines.

-It only takes a small fee, and you'll have a better chance at a substantial profit.

-Crawled quickly.

Source: http://goodbacklink.info

Similar Page Checker

Tuesday, October 25, 2011

Datacenter Quick Check

Future PageRank Tool

Source: http://www.seochat.com/seo-tools/future-pagerank/

seo tool land seo leaders online traffic mystic good backlink see book

Search Engine Spiders

Source: http://www.searchwho.com/sw5-spider.html

seo tool land seo leaders online traffic mystic good backlink see book

SMX East 2011 Recap for SEOBook

Useful Links:

SMX Facebook: http://www.facebook.com/searchmarketingexpo

Twitter # activity for the conference: http://twitter.com/#!/search/%23smx

Another successful SMX East is in the books. From all accounts, the event went off without a hitch. Kudos to Danny Sullivan, Claire Schoen, and crew, as the caliber of speakers, sessions, and attendees was top notch, as always. Judging from the event, search marketing is alive and thriving more than ever before. There was a healthy mix of industry experts, consultants, large corporations, agencies, and small businesses. The sessions covered a broad range of topics, from beginner link building fundamentals to more advanced technical SEO sessions covering site architecture, technical coding optimization, and everything in between. A huge thank you goes out to the organizers for a job well done.

It seemed there were two themes that surfaced regularly - Panda and Google Plus/+1. Clearly, there are still many webmasters struggling with Panda and how to properly handle content in the new post-Panda world. The search engines are addressing this and giving webmasters and SEOs more tools and information to organize their websites correctly. After some of the presentations, it seems Google is very dedicated to their Plus and +1 initiatives, which will have a large effect on SEO should end-user usage continue to increase.

Below are tidbits and takeaways from the conference, from an SEO perspective. Enjoy!

Schema.org, Rel=Author & Meta Tagging For 2012

Panelists:

Janet Driscoll Miller, Search Mojo http://twitter.com/#!/janetdmiller

Topher Kohan, CNN https://twitter.com/#!/Topheratl

Jack Menzel, Google http://twitter.com/#!/jackm

Microformats were the original snippet format; however, they have been replaced by the new and evolving standard, microdata (which is what Schema.org, Google, and Bing are developing for and putting resources toward). Some notes from the presentations:

- General consensus is rich snippets can greatly help in getting your content noticed.

- In one example given, Eatocracy added the hRecipe tag to their pages, and immediately saw a 47% increase in their recipes being picked up and indexed into Google (which does support this in their recipe search). Additionally, they saw a 22% increase in their recipe traffic.

- CNN started using Yahoo SearchMonkey / RDFa, and saw a 35% increase in their video content on Google Video search and a 22% increase in overall search traffic. However, they removed the additional code from their site as it increased their page load time. The takeaway is that you should plan to integrate this into your own dev cycle, your CMS, or your template.

- Per Google, their studies show that sites w/ rich snippets have a better CTR as well. Rich Snippets Engineer at Google, RV Guha, noted, "From our experiments, it seemed that giving the user a better idea of what to expect on the page increases the click-through rate on the search results. So if the webmasters do this, it's really good for them. They get more traffic. It's good for users because they have a better idea of what to expect on the page. And, overall, it's good for the web."

- Rich snippets only work for one site (no cross site references).

- Sites like LinkedIn and Google Profiles still use microformats. Google has also provided a tool in WMT, but it is a bit buggy and may throw false errors. If you don't see your snippets show up in the SERPs, it's likely caused by longer-than-preferred load times, errors in your code, or a random Google bug (per Google).

- The current types of rich snippets: reviews, people, products, businesses & organizations, recipes*, events, music
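To make the microdata idea concrete, here is a minimal Python sketch that renders a recipe with Schema.org microdata attributes. It is an illustration only, not the markup Eatocracy or CNN used: the function name `recipe_microdata` and the sample values are hypothetical, and the property names (`name`, `author`, `recipeIngredient`) are taken from the current Schema.org Recipe type, which may differ from what was published in 2011.

```python
from html import escape


def recipe_microdata(name, author, ingredients):
    """Render a recipe as HTML annotated with Schema.org microdata.

    Hypothetical helper for illustration; the itemprop names follow the
    current Schema.org Recipe type and are an assumption, not the source's.
    """
    items = "\n".join(
        f'    <li itemprop="recipeIngredient">{escape(item)}</li>'
        for item in ingredients
    )
    return (
        '<div itemscope itemtype="https://schema.org/Recipe">\n'
        f'  <h1 itemprop="name">{escape(name)}</h1>\n'
        f'  <span itemprop="author">{escape(author)}</span>\n'
        f"  <ul>\n{items}\n  </ul>\n"
        "</div>"
    )


# Sample values are made up for the example.
snippet = recipe_microdata("Pancakes", "Jane Doe", ["flour", "salt & pepper"])
print(snippet)
```

Generating the markup from a template function like this is one way to follow the CNN takeaway above: the annotations live in the template rather than being bolted onto individual pages.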

Session - "Ask the Search Engines"

Panelists:

Tiffany Oberoi - Google http://twitter.com/#!/tiffanyoberoi

Duane Forrester - Bing http://twitter.com/#!/duaneforrester

Rich Skrenta - Blekko http://twitter.com/#!/skrenta

- One audience member asked how to handle "subcategory" pages that are often created on ecommerce sites, such as "Sort Prices $0-$5", "Prices $5-$25", etc. The question was whether or not to use the "rel=canonical" tag and point the pages back to the main page. The panelists agreed that those pages should be blocked completely and should not use the canonical tag. The Google representative said not only do these pages not add value to the engine's index, but they also eat up the site's crawl budget.

- If you see the warning "we're seeing a high # of URLs" in Webmaster Tools, most times it's a duplicate content issue.

- One audience member asked: do you look at subdomain as part of the main domain?

- Blekko - no inheritance from main domain

- Google - "it depends". Sometimes it is inherited, sometimes not.

- Bing - we look and try to determine if subdomain is a standalone business/website and will get treated differently based on that determination

- One question touched on removing URLs from Google's index. Google advised that a removed URL may or may not stay in the index for a period of time, and that to expedite removal of a URL one should use the Webmaster Tools remove-url tool.

- Duane from Bing was adamant about keeping your submitted sitemap clean. The threshold is 1%. If more than 1% of the URLs in your submitted sitemap have issues, Bing will "lose trust" in your website.

- Panelists advised to make your 404 pages useful to the user

- It may not be breaking news, but Bing and Google both said unequivocally - duplicate content does hurt you

- Google commented they are big fans of HTML 5 technology

- At this point it seems Google will crawl a page if +1 is present, regardless of the robots.txt. This could possibly create issues with trying to not crawl certain pages to avoid dup content. More information found here: http://www.webmasterworld.com/google/4358033.htm

- Panelists advised to spend a lot of energy "containing URLs" on your website and to be thoughtful about which URLs you are getting out there

- Bing and Google confirmed that "PageRank sculpting" is misunderstood and not effective. For example, if a page has 5 outgoing links and link juice is spread 20% to each of the 5 links, nofollowing one of the links will not raise the distribution to 25% for each of the remaining 4 links. It will remain 4 x 20%. In essence, you have just evaporated potential link juice
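The PageRank sculpting arithmetic above can be sketched in a few lines of Python. This models only what the panelists described, under the assumption that link equity is split evenly across all outgoing links and that the share of a nofollowed link is dropped rather than redistributed; the function name `link_share` is hypothetical and this is not a description of how any engine actually computes rankings.

```python
def link_share(total_links, nofollowed):
    """Split one page's link equity under the model described at the session.

    Assumption: equity is divided evenly across ALL outgoing links, and the
    share belonging to nofollowed links is lost instead of redistributed.
    """
    if total_links <= 0 or not 0 <= nofollowed <= total_links:
        raise ValueError("need total_links > 0 and 0 <= nofollowed <= total_links")
    per_link = 1.0 / total_links
    followed = total_links - nofollowed
    return {
        "per_followed_link": per_link,        # share each followed link receives
        "passed": per_link * followed,        # total equity actually passed on
        "evaporated": per_link * nofollowed,  # equity lost to nofollowed links
    }


# The example from the session: 5 outgoing links, 1 of them nofollowed.
result = link_share(total_links=5, nofollowed=1)
print(result)
```

In this model each followed link still receives 20%, so the four followed links pass on 80% in total and the remaining 20% is simply lost.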

Google Plus and +1

These were hot topics at this year's SMX East. Multiple sessions covered Google Plus and +1 in depth.

- Speaker Benjamin Vigneron from Esearchvision covered the basics of Google Plus and +1. He noted a +1 to a search result will +1 the PPC ad/landing page, too.

- With PPC, +1 could have a significant effect on Ad Rank by affecting each of the Quality Score factors, including quality of the landing page, CTR, and the ad's past performance.

- Interesting that Adwords could conceivably add segmenting on all information in Google Plus (similar to FB) ie males, ages, etc.

- Christian Oestlien, the Google Product Manager for Google Plus, spoke about Google Plus features and fielded questions. He mentioned Google is testing and experimenting with celebrity endorsements +1'ing and showed an example SERP with a +1 annotation under the search result (for example, "Kim Kardashian has +1'ed" Brand X or search result X). He noted Google is seeing much higher CTR with the +1 annotation and that usage of the "Circles" feature is relatively high.

- Google software engineer Tiffany Oberoi was also present on the panel. She noted +1 is NOT a ranking factor, but social search is still of course implemented in search results. She confirmed Facebook likes have no impact on rankings but also noted regarding social signals, "explicit user feedback is like gold for us". She also touched on spam with +1 and said she is currently working with the spam team. Regarding +1's and spamming, she said to think of +1's similarly to links. The same guidelines could apply. Google wants to use them as a real signal. Using them in an unnatural way will not be good for you.

Hardcore Local Search Tactics

Panelists:

Matt McGee - Search Engine Land

Mike Ramsey - Nifty Marketing

Will Scott - Search Influence

Panelists here gave an encore presentation of the session these folks put on at SMX Advanced in Seattle. The content was excellent and definitely deserved another run through. Here are the notes:

- July 21st, Google removed citations from their Places listings. While they have been removed for public viewing, they are still used. Sources like Whitespark (link: http://www.whitespark.ca/) can be very helpful in uncovering citation building opportunities.

- Citation accuracy is among the most important factors in getting your business to rank in the O or 7-Pack. Doing a custom Google search of "business name"+"address"+"phone number" will help determine what other sources Google sees as citation sources.

- Comparing the average number of IYP reviews for ranked versus non-ranked listings showed a large gap, indicating that IYP reviews do in fact carry quite a bit of listing weight.

- Offsite citations / data appear to be the no. 1 ranking factor in Places listings

- Linking Root Domains appear to be the no. 2 ranking factor in Places listings

- Exact match anchor links appear to be the no. 3 ranking factor in Places listings

- Links are the new citations for local in 2011-12

- Building a custom landing page to link your Places Listing to appears to be a huge success factor. Include your Name, Address, Phone (NAP) in the title tag

- Design that landing page to mirror a Places listing on their site w/ a map, business hours, contact data, etc.

- If needed, submit your contact/location page as your Places URL/Landing Page which will create a stronger geo scent

- When trying to understand how users are searching for your client, Insights for Search is a great tool, as you can find geo-targeted data w/ KW differentiation (i.e. Lawyer vs Attorney, and which is used more in that area)

- Local requires a different mindset from traditional SEO

- Optimize location (local SEO) vs Optimize websites (traditional SEO)

- Blended search is about matching them up

- PageRank of Places URL does NOT seem to affect Local ranking -(source: David Mihm)

- Multi-Location Tips

- Flat site architecture beginning w/ a "Store Locator" page

- Great Example, lakeland.co.uk/StoreLocator.action

- Give each location its own page

- Great Example, lakeland.co.uk/stores/aberdeen

- Cross link nearby locations w/ geo anchor text

- Ensure the use of KML Sitemap in Google WMT

- Encourage Community Edits - Make Use of Google's Map Maker

- Include Geo data in Facebook pages and article engines
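Two of the tips above, the quoted "business name"+"address"+"phone number" search and putting NAP in the title tag, can be sketched as small Python helpers. The function names and the sample business are hypothetical, joining the quoted phrases with spaces and separating the title parts with " | " are assumptions made for the example, and the URL uses the standard Google search `q` parameter.

```python
from urllib.parse import urlencode


def citation_query(name, address, phone):
    """Build an exact-phrase query from the business name, address, and phone."""
    return " ".join(f'"{part}"' for part in (name, address, phone))


def search_url(query):
    """Turn a query into a Google search URL via the standard q parameter."""
    return "https://www.google.com/search?" + urlencode({"q": query})


def nap_title(name, address, phone):
    """Compose a title-tag string carrying Name, Address, Phone (NAP).

    The " | " separator is an arbitrary choice for this sketch.
    """
    return f"{name} | {address} | {phone}"


# Made-up business details, purely for illustration.
query = citation_query("Acme Plumbing", "123 Main St", "555-0100")
url = search_url(query)
title = nap_title("Acme Plumbing", "123 Main St", "555-0100")
print(query)
print(url)
print(title)
```

Keeping NAP formatting in one helper also makes it easier to use the exact same string everywhere, which matters given how much weight the panel placed on citation accuracy.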

Panda Recovery Case Study - High Gear Media

Speaker Matt Heist from High Gear Media covered their experiences over the past 8 months with recovering from Panda. High Gear Media is an online publisher of auto news and reviews.

Heist walked through the company's pre-Panda strategy and explained their contrasting post-Panda strategy. The original strategy was many auto review niche sites across a broad range of auto makes, models and manufacturers. The company originally had 107 sites and 20+ writers and dispersed content amongst all the sites. The content was "splashing" everywhere, unfocused. The "large network of microsites" strategy was working and traffic was climbing each month. Then Panda hit - hard. Traffic plummeted beginning this past Spring. Leaders at High Gear were forced to reevaluate their strategy and concluded that a more focused approach was better for users and consequently would help search traffic recover.

High Gear took the following actions:

- Eliminated most of their properties completely (301'ed) and pared them down to 7 total sites with 4 being "core": FamilyCarGuide, Motorauthority, GreenCarReports, TheCarConnection.

- Properly canonicalized duplicate content

- Aggregated content with strong user engagement was KEPT, but not indexed

- They made the hard decision to eliminate content that could be making money but was not good for the long term

- Dedicated significant resources to redesigning each of the 7 remaining sites

Their strategy seems to be working. Heist noted traffic has "flipped, plus some". According to Heist, here are the learnings:

- High Gear Media believes that premium content will prevail and that Panda will help that

- Advertisers like bigger brands - it is now easier to sell ads and for more $ with fewer, more powerful sites

- With the evolution of Social (joining Search from a distribution perspective), premium content that is authoritative AND fresh will flourish

Raven Tools

We were able to meet up with the friendly staff over at Raven Tools, sit down with them, and learn a bit more about their product. We personally have been using Raven for about a year now, and highly recommend it. There are several features in the works that will make this even more of an incredible product. If you haven't used them, we would HIGHLY suggest giving the tools a run. They are partnering with new companies constantly, and as such, are building out a best in class seo management product.

Upcoming Features:

- A new feature they are working on is a Chrome Toolbar to complement the current Firefox toolbar

- Another feature coming is "templated messaging" for link requests and manual link building, which will include BCCs back to records. Templated Messaging will be built into their Contact Manager, but they are working on making that functionality available in the toolbar.

- Another upcoming feature is file management. RavenTools engineers are looking at integrating Dropbox into the system to allow files to be associated with other data and records.

- The Co-Founder Jon Henshaw alluded many times to the idea that link building, and consequently their toolset, will continue to become more and more based on relationships in the future. He also alluded to the idea that traffic can, or in some cases should, be associated with PEOPLE as the referrer, rather than a website (i.e. x amount of traffic came from person A, whether it be their Facebook, Twitter, blog, or website). In other words, a relationship management system looks to be an integral part of the future of Raventools.

- For future updates, Raventools takes explicit user feedback greatly into account. If you have a feature request or a software integration request, please contact: http://raventools.com/feature-requests/

- A new feature called "link clips" was released last week just before the SMX show. You can find details about this powerful update here: http://raventools.com/blog/new-feature-raven-link-building-with-link-clips/

- Regarding MajesticSEO and OSE/Linkscape, they will be integrating them more fully into the Research section of Raven. That means they'll be adding as much functionality into Raven as the APIs will allow. In addition to getting fuller access to that data, users will be able to easily add that data to other tools, like the Keyword and Competitor Managers, Rank Tracker, etc.

- Speed is the number one priority right now. They have full-time staff that are solely dedicated to speeding up the system. The goal is to make it run as fast as a desktop app.

- Long term - 3rd party integration will be a constant (and should accelerate) for the platform for the foreseeable future.

- Screenshot of "Social Stream" prototype design http://cl.ly/1b2h0u3P3U441w000o1K/o

- AdWords Insights: Flagged Pages: http://cloud.raven.im/9v8d

- Link Clips link checker results with historical results: http://cloud.raven.im/9zgK/o

Other Notes

- Regarding Panda, one panelist referenced what he called a website's "Content Performance Ratio", referring to the % of content on a site that is good versus bad, or "performing vs non performing", and using that as a gauge of the health of a website.

- One panelist also noted that, in his experience, it takes 3-4 requests returning a 404 before a search engine believes you and removes the page from the index.

- Panelists in the "Ask the SEO" session said to pay close attention to anchor text diversity and human engagement signals
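As a rough illustration of the "Content Performance Ratio" idea, the Python sketch below computes the share of pages that count as performing. The panelist did not define "performing", so the rule used here (a page performs if it received at least `min_visits` visits in the period) is purely an assumption, as are the function name and the sample data.

```python
def content_performance_ratio(page_visits, min_visits=1):
    """Return the fraction of pages that are 'performing'.

    Assumption: a page performs if it received at least min_visits visits.
    The session notes do not define the term, so treat this as one possible
    reading rather than the panelist's formula.
    """
    if not page_visits:
        raise ValueError("page_visits must contain at least one page")
    performing = sum(1 for visits in page_visits.values() if visits >= min_visits)
    return performing / len(page_visits)


# Made-up visit counts for four pages.
pages = {"/guide": 120, "/old-tag-page": 0, "/review": 3, "/thin-stub": 0}
ratio = content_performance_ratio(pages)
print(f"{ratio:.0%} of pages are performing")
```

Raising `min_visits` gives a stricter reading of the same gauge.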

Author bio:

Jake Puhl is the Co-Founder/Co-Owner of Firegang Digital Marketing, a Local search marketing company, specializing in all aspects "Local", including custom web design, SEO, Google Places, and local PPC advertising. Jake has personally consulted businesses from Hawaii to New York and everywhere in-between. Jake can be contacted at jacobpuhl at firegang.com.

Source: http://www.seobook.com/smx-east-2011-recap-seobook

link building use seo seo tools seo tool land seo leaders online

Five Tips for Your Online Promotional Strategy

Source: http://www.seo-leaders-online.co.za/seo/five-tips-for-your-online-promotional-strategy/

Monday, October 24, 2011

SEO Follow and NoFollow

Source: http://www.mkpitstop.com/Art/104037/94/SEO-Follow-and-NoFollow.html

seo tools seo tool land seo leaders online traffic mystic good backlink

About us

Source: http://goodbacklink.info/about.html

link building use seo seo tools seo tool land seo leaders online

Reasons for Hiring a Professional SEO Expert for a Local Business

use seo seo tools seo tool land seo leaders online traffic mystic

Link Popularity Checker

Source: http://www.webmaster-toolkit.com/link-popularity-checker.shtml

link building use seo seo tools seo tool land seo leaders online

Robots.txt Generator

Source: http://www.123promotion.co.uk/tools/robotstxtgenerator.php

Sunday, October 23, 2011

How to Get More Views on YouTube

Source: http://www.trafficmystic.com/214/how-to-get-more-views-on-youtube/

Contact us

Source: http://goodbacklink.info

Keyword Difficulty Tool

Source: http://www.seomoz.org/tools/keyword-difficulty-tool.php

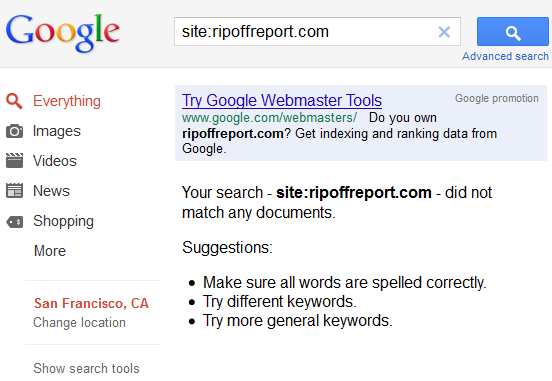

Google Rips Rip Off Report From The Search Results

We live in a culture where it is far more profitable to solve symptoms than it is to solve problems. As such, the disappearance of ripoffreport.com from Google's index probably has retainer-based reputation management firms like reputation.com singing the blues.

Ed Magedson, the owner of Rip Off Report, has been charged with RICO in the past and managed to come through unscathed, but he has never tackled an opponent as media savvy or powerful as Google.

He is pretty savvy with the legal system & the media, so it will be fun to watch how he responds to this one, as his business model relied on top Google rankings:

Attorney: So what I've gathered from all of your testimony, Dickson, is that Ed Magedson has indirectly told you that he is responsible for making posts about companies. He will make these posts.

Mr. Woodard: Yes.

Attorney: And then he will manipulate the search engines; is that true?

Mr. Woodard: No question about the search engines. That's where the money is made.

A new take on Will it Blend: can a vampire suck blood from another vampire?

Vampires have often found it advantageous to maintain a hidden presence in humanity's most powerful institutions. In the 1600s, it was the Catholic church, and today, as you all know, it's Google, Fox News.

Update: adding intrigue to the situation, it looks like the site was removed due to a request inside Google Webmaster Tools, but the folks from ROR claimed they didn't make the removal request: "Ripoff Report did not intentionally request Google to delist the website, and we are still investigating what occured."

Update 2: Looks like they are back ranking in Google again. Perhaps someone found yet another loophole with Google's URL removal feature.

Source: http://www.seobook.com/google-rips-rip-report-search-results

seo tool land seo leaders online traffic mystic good backlink see book

Weekly SEO Tips- Avoid Common SEO Mistakes

Saturday, October 22, 2011

Backlink Check Tool

Building cheap backlink profile today

-Quality backlinks, built from high-PR websites (PR 4+), not from spam websites or websites with bad content.

-100% of profiles are publicly viewable, quality, permanent links.

-100% of profiles are created manually, by hand.

-100% of profiles are do-follow.

-To make the backlink profiles look natural, we always change proxies frequently.

-The price is right, and the results will pleasantly surprise you.

In each package:

-All backlink profiles are sent to a ping tool; your backlinks will be pinged on more than 50 main ping sites, which helps these profiles get indexed more quickly (free service).

-An RSS feed is created for all backlink profiles, and this feed is submitted to more than 70 RSS feed sites, which places your backlinks on more sites (free service).

Source: http://goodbacklink.info

use seo seo tools seo tool land seo leaders online traffic mystic